Oversight is at the heart of AI governance, especially for advanced systems. But “a human is involved” can mean many things – from carefully reviewing every action to occasionally glancing at dashboards. If you don’t define oversight modes clearly, people will assume you have more control than you actually do.

DASUD (Design, Acquire, Store, Use, Delete) gives you a structure for making oversight explicit at Design and enforcing it at Use.

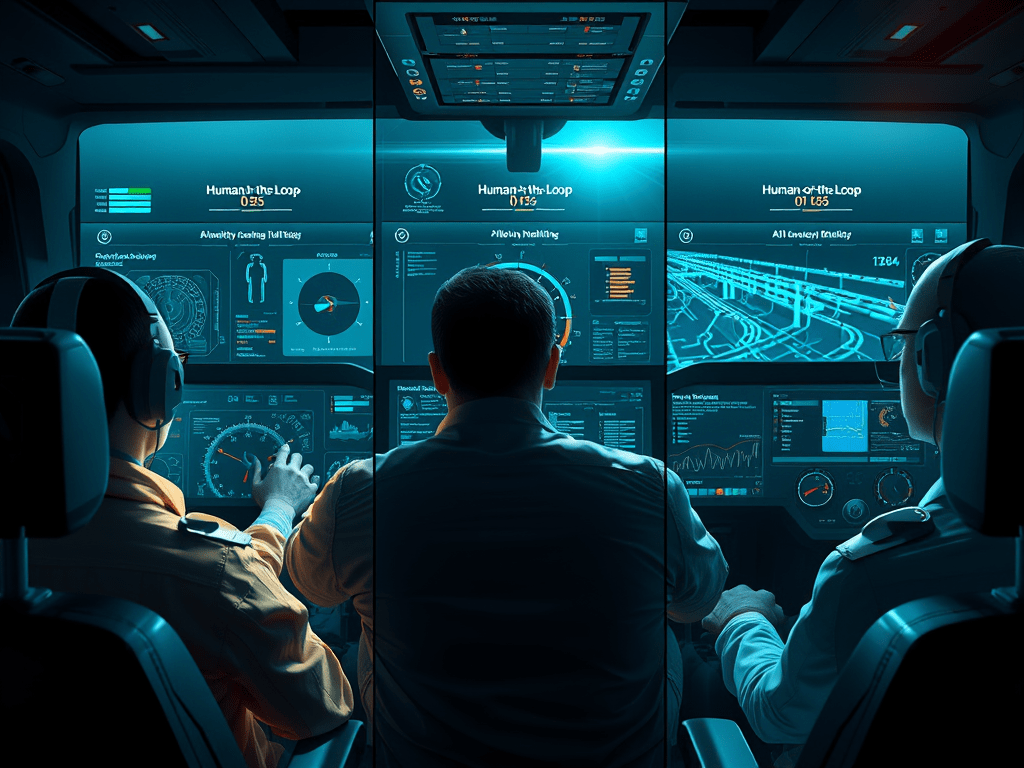

Three oversight modes in simple terms

Let’s define three patterns:

- Human‑in‑the‑loop (HITL): The AI proposes; a human approves before anything high‑impact happens. Think “draft and sign‑off.”

- Human‑on‑the‑loop (HOTL): The AI acts autonomously within defined boundaries; humans monitor and can intervene. Think “autopilot with a pilot present.”

- Human‑off‑the‑loop (fully automated): The AI acts without ongoing human oversight. This is suitable only for low‑risk tasks or very mature systems.

These aren’t labels for marketing slides; they must translate into actual workflows and technical controls.

Design: choose oversight mode per use case

At the Design stage, for each AI use case (GenAI, RAG, agents), assess:

- What is the impact if the system is wrong or misbehaves?

- Who is affected – internally, externally, and by how much?

- What is the regulatory and ethical context?

Then, choose:

- HITL for high‑impact actions: e.g., sending customer notices, making HR decisions, or altering critical system configurations.

- HOTL for medium‑impact, repeatable actions: e.g., auto‑classifying tickets or suggesting next best actions for sales reps.

- Automated (human‑off‑the‑loop) for low‑impact or purely internal tasks: e.g., personal productivity assistants that draft notes, where errors are easy to correct.

Document this choice and the reasoning behind it.
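The selection logic above can be sketched in Python. This is a minimal, hypothetical example: the `Impact` scale, the `UseCaseAssessment` fields, and the rule that any regulated use case defaults to HITL are illustrative assumptions, not part of DASUD. The point is that the chosen mode and its rationale come out together, so documenting the reasoning is part of the decision rather than an afterthought.

```python
from dataclasses import dataclass
from enum import Enum


class OversightMode(Enum):
    HITL = "human-in-the-loop"         # human approves before high-impact actions
    HOTL = "human-on-the-loop"         # system acts; humans monitor and can intervene
    AUTOMATED = "human-off-the-loop"   # no ongoing human oversight


class Impact(Enum):
    LOW = 1
    MEDIUM = 2
    HIGH = 3


@dataclass(frozen=True)
class UseCaseAssessment:
    name: str
    impact: Impact            # consequence if the system is wrong or misbehaves
    affects_external: bool    # reaches customers, candidates, or the public
    regulated: bool           # specific regulatory or ethical constraints apply


def choose_mode(a: UseCaseAssessment) -> tuple[OversightMode, str]:
    """Return an oversight mode plus a rationale string to record with the decision.

    The rules below are illustrative assumptions, not a standard.
    """
    if a.impact is Impact.HIGH or a.regulated:
        return OversightMode.HITL, f"{a.name}: high impact or regulated; human sign-off required"
    if a.impact is Impact.MEDIUM or a.affects_external:
        return OversightMode.HOTL, f"{a.name}: medium impact or external reach; monitor and intervene"
    return OversightMode.AUTOMATED, f"{a.name}: low impact, internal, errors easy to correct"


mode, rationale = choose_mode(UseCaseAssessment("ticket triage", Impact.MEDIUM, False, False))
print(mode.name, "-", rationale)
```

In practice the rules would be agreed with risk, legal and business owners; the function simply makes them explicit and repeatable.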

Acquire/Store: support oversight with data

To make oversight possible, you need the right data:

- Inputs and context: What did the system see or retrieve (prompts, RAG documents, sensor data)?

- Outputs and actions: What did it propose or do (drafts, tool calls, state changes)?

- Human responses: Was each action approved, modified, or rejected, and by whom?

Store this information with enough structure that you can:

- Reconstruct decisions for audit or investigation.

- Analyse oversight quality (e.g., which reviewers consistently catch errors).

This is where Acquire and Store intersect with oversight: you’re acquiring human feedback and storing evidence of control.
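A minimal Python sketch of what such structured evidence might look like, assuming an append-only JSON Lines file as the store. `OversightRecord` and its field names are hypothetical. The reviewer metric treats "modified or rejected" as a rough proxy for catching errors; a high intervention rate could equally reflect poor prompts or an overly cautious reviewer, so read it alongside the records themselves.

```python
import json
import time
import uuid
from dataclasses import asdict, dataclass, field
from typing import Optional


@dataclass
class OversightRecord:
    use_case: str
    inputs: dict                           # prompt, retrieved RAG document IDs, sensor readings
    proposed_action: dict                  # draft text, tool call, intended state change
    human_decision: Optional[str] = None   # "approved", "modified", "rejected", or None
    reviewer: Optional[str] = None
    final_action: Optional[dict] = None    # what actually executed after review
    record_id: str = field(default_factory=lambda: str(uuid.uuid4()))
    timestamp: float = field(default_factory=time.time)


def append_record(path: str, record: OversightRecord) -> None:
    """Append one record as a JSON line so the log is easy to stream and query."""
    with open(path, "a", encoding="utf-8") as f:
        f.write(json.dumps(asdict(record)) + "\n")


def load_records(path: str) -> list[OversightRecord]:
    with open(path, encoding="utf-8") as f:
        return [OversightRecord(**json.loads(line)) for line in f if line.strip()]


def reconstruct(path: str, record_id: str) -> Optional[OversightRecord]:
    """Retrieve the full context of one decision, e.g. during an audit."""
    return next((r for r in load_records(path) if r.record_id == record_id), None)


def catch_rate_by_reviewer(records: list[OversightRecord]) -> dict[str, float]:
    """Share of each reviewer's decisions that modified or rejected the proposal."""
    totals: dict[str, int] = {}
    caught: dict[str, int] = {}
    for r in records:
        if r.reviewer is None or r.human_decision is None:
            continue  # unreviewed records say nothing about reviewer quality
        totals[r.reviewer] = totals.get(r.reviewer, 0) + 1
        if r.human_decision in ("modified", "rejected"):
            caught[r.reviewer] = caught.get(r.reviewer, 0) + 1
    return {name: caught.get(name, 0) / n for name, n in totals.items()}
```

For example, call `append_record("oversight_log.jsonl", record)` after each review and `reconstruct("oversight_log.jsonl", record_id)` during an investigation; the file name is a placeholder.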

Use: implement HITL and HOTL in workflows

For HITL:

- Ensure every high‑risk action goes through an approval step: Technically enforce that certain operations cannot execute without a human signal.

- Make review easy and meaningful: Provide clear summaries, highlight what is new or changed compared with the prior state, and show the consequences of approval.

- Prevent rubber‑stamping: Require brief reasoning notes for approvals, or sample and re‑review approvals to check they were thoughtful.
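The HITL controls above can be sketched as a gate around the action itself. In this hypothetical Python example, `execute_with_approval` refuses to run anything without an `Approval` carrying a reason of at least `MIN_REASON_CHARS` characters, and `review_summary` uses the standard library's `difflib` to show reviewers only what would change. In a real system the approval would come from an authenticated review step (for example a workflow or ticketing tool) rather than being passed in by the calling code; that integration is assumed, not shown.

```python
import difflib
from dataclasses import dataclass
from typing import Callable, Optional


class ApprovalRequired(Exception):
    """Raised when a high-risk operation lacks a valid human approval."""


@dataclass(frozen=True)
class Approval:
    reviewer: str
    approved: bool
    reason: str  # free-text justification recorded with the decision


MIN_REASON_CHARS = 15  # hypothetical threshold to discourage one-word sign-offs


def review_summary(current: str, proposed: str) -> str:
    """Show the reviewer only what would change, as a unified diff."""
    return "\n".join(
        difflib.unified_diff(
            current.splitlines(), proposed.splitlines(),
            fromfile="current", tofile="proposed", lineterm="",
        )
    )


def execute_with_approval(action: Callable[[], str], approval: Optional[Approval]) -> str:
    """Run `action` only if a human approved it and gave a non-trivial reason."""
    if approval is None or not approval.approved:
        raise ApprovalRequired("no human approval recorded")
    if len(approval.reason.strip()) < MIN_REASON_CHARS:
        raise ApprovalRequired("approval reason too short to evidence a real review")
    return action()


current = "Dear customer,\nYour plan renews on 1 May."
proposed = "Dear customer,\nYour plan renews on 1 June."
print(review_summary(current, proposed))
```

A length check cannot prove a review was thoughtful, which is why sampling approvals for re-review remains a useful complement.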

For HOTL:

- Provide dashboards and alerts: Show trends, anomalies, and key metrics (error rates, incident counts, unusual patterns of tool use).

- Define intervention mechanisms: Make it possible to pause systems, roll back changes, or switch oversight modes quickly if issues arise.
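For HOTL, one simple intervention mechanism is a circuit breaker that pauses automation when a monitored metric drifts. This Python sketch tracks the error rate over a rolling window; `HotlMonitor`, the window size and the 5% threshold are hypothetical defaults, and a real deployment would feed `record()` from its own quality checks and route `pause()` to alerting and rollback tooling.

```python
from collections import deque


class HotlMonitor:
    """Tracks recent outcomes and pauses automation when the error rate spikes."""

    def __init__(self, window: int = 100, max_error_rate: float = 0.05, min_samples: int = 20):
        self.outcomes: deque = deque(maxlen=window)  # True = error, False = ok
        self.max_error_rate = max_error_rate
        self.min_samples = min_samples  # avoid tripping on a handful of early results
        self.paused = False
        self.alerts: list[str] = []

    def error_rate(self) -> float:
        return sum(self.outcomes) / len(self.outcomes) if self.outcomes else 0.0

    def record(self, is_error: bool) -> None:
        """Log one outcome and trip the breaker if the threshold is exceeded."""
        self.outcomes.append(is_error)
        rate = self.error_rate()
        if not self.paused and len(self.outcomes) >= self.min_samples and rate > self.max_error_rate:
            self.pause(f"error rate {rate:.1%} exceeded {self.max_error_rate:.1%}")

    def pause(self, reason: str) -> None:
        """Intervention hook: stop autonomous actions and notify a human."""
        self.paused = True
        self.alerts.append(reason)

    def resume(self, reviewer: str) -> None:
        """Only a named human switches automation back on; history is reset."""
        self.paused = False
        self.alerts.append(f"resumed by {reviewer}")
        self.outcomes.clear()

    def allow_action(self) -> bool:
        """Callers check this before every autonomous action."""
        return not self.paused
```

Requiring a named reviewer to call `resume()` keeps the switch back to autonomous operation a deliberate human decision.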

Oversight is not just a checkbox; it’s a designed user experience.

Delete: oversight records and learning

Oversight generates artefacts:

- Logs of approvals and overrides.

- Incident reports.

- Feedback data from reviewers.

Decide:

- How long to retain oversight records: They may be critical for regulatory defence or internal accountability.

- How to use them in learning: Feed them into model improvements, prompt tuning, or policy changes, but with privacy and bias considerations in mind.

- When to “forget”: Eventually, some detailed oversight records may no longer be needed; balance audit needs against data minimisation.
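Retention and reuse decisions can also be encoded rather than left to memory. This Python sketch assumes three record types with illustrative retention periods (the `RETENTION` values are placeholders, not recommendations) and lets a legal hold override deletion. `pseudonymise` uses a keyed HMAC-SHA256 hash so reviewer identities can be removed before feedback is reused for learning; keyed hashing is pseudonymisation, not anonymisation, so the key itself needs protecting.

```python
import hashlib
import hmac
from dataclasses import dataclass
from datetime import datetime, timedelta, timezone
from typing import Optional


@dataclass
class StoredRecord:
    record_id: str
    kind: str                 # "approval_log", "incident_report" or "reviewer_feedback"
    created: datetime
    legal_hold: bool = False  # e.g. linked to an open investigation or claim


# Hypothetical retention periods; real values should come from legal and regulatory advice.
RETENTION = {
    "approval_log": timedelta(days=3 * 365),
    "incident_report": timedelta(days=7 * 365),
    "reviewer_feedback": timedelta(days=365),
}


def split_for_deletion(records: list[StoredRecord], now: Optional[datetime] = None):
    """Return (keep, delete): expired records not under legal hold are marked for deletion."""
    now = now or datetime.now(timezone.utc)
    keep, delete = [], []
    for r in records:
        expired = now - r.created > RETENTION[r.kind]
        (delete if expired and not r.legal_hold else keep).append(r)
    return keep, delete


def pseudonymise(reviewer_id: str, secret: bytes) -> str:
    """Replace a reviewer's identity with a keyed hash before reuse in training or analysis."""
    return hmac.new(secret, reviewer_id.encode("utf-8"), hashlib.sha256).hexdigest()[:16]
```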

Make it concrete

For one AI use case in your environment:

- Classify it as HITL, HOTL, or automated.

- Document the reasons and share them with stakeholders.

- Specify what evidence must be captured to support that oversight mode.

- Inspect actual workflows to confirm the oversight pattern is truly implemented.
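The last step, inspecting whether the declared mode is really in force, can be partly automated against the oversight log. This Python sketch assumes log entries are dictionaries with hypothetical fields such as `action`, `executed` and `human_decision`; it flags high-impact actions that ran without an approving decision and lists the evidence fields missing for a given mode.

```python
# Hypothetical evidence requirements per oversight mode; adapt field names to your own logs.
REQUIRED_EVIDENCE = {
    "HITL": {"inputs", "proposed_action", "human_decision", "reviewer"},
    "HOTL": {"inputs", "proposed_action", "monitor_status"},
    "AUTOMATED": {"inputs", "proposed_action"},
}


def missing_evidence(entry: dict, mode: str) -> set[str]:
    """Fields the declared mode requires but this log entry lacks or leaves empty."""
    return {f for f in REQUIRED_EVIDENCE[mode] if entry.get(f) in (None, "")}


def unapproved_executions(log: list[dict], hitl_actions: set[str]) -> list[str]:
    """IDs of executed high-impact actions with no approving human decision on record."""
    return [
        e["id"] for e in log
        if e.get("action") in hitl_actions
        and e.get("executed")
        and e.get("human_decision") not in ("approved", "modified")
    ]
```

Automated checks like these complement, rather than replace, walking through the workflow with the people who operate it.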

With explicit oversight modes running through DASUD, you move from “trust us, humans are involved” to “here is exactly how and where humans are in control.”
